A SIFT Rebuild (in progress)

It's probably too important to keep my mouth shut until it is done

I presented yesterday at Let’s Talk Science’s National Day of Learning, a lovely event in Canada. I shared some of my recent work, including a revision of SIFT that I’m excited about but not yet sure I’ll adopt. I thought I’d share it here as well.

What’s wrong with SIFT

Nothing is wrong with SIFT, really. If it’s working for you, continue to use it. But two trends have caused me to question the relative emphasis of its parts.

The core of SIFT is an understanding that there are three major ways to approach a claim that arrives on your doorstep.

First, you can look at the source. If the source is good (say you’re seeing information on a stock market plunge from Bloomberg), you’re probably fine. If you’re reading about the economic impact of the minimum wage on a site that’s an astroturf front for the restaurant industry, I’d say disregard it.

If you don’t like the source, you can find better coverage: forget about the source that landed in front of you and look at the claim by finding other coverage of it. So you ditch the astroturf site, search “estimated impact of minimum wage,” get back a bunch of new sources, investigate those, and so on. As an alternative (or additional) path, you can find out where the claim or artifact (a photo, a quote) came from, and try to trace what landed on your doorstep back to its original context.

It’s been a very durable model. The understanding that one easy way to think about these challenges is as a source-claim-source leapfrog has been valuable, as has the reduction of 26 different questions to three big buckets: What do people say about the source? What do people say about the claim? And where did the claim come from anyway?

All of that still works, but two things have changed that make me think the source-claim emphasis needs to be rejiggered.

The sharing source is now worthless, and the reporting source obscured

When SIFT debuted in 2017 we lived in an environment where the sharing source, the source that reached you, was often a useful indicator of quality, and the reporting source (e.g. a link to a website) was transparent enough to see immediately. People's feeds were a mix of news sources and personal follows. Tweets and shares promoted the sites and organizations behind them, which could then be evaluated.

Maybe you saw something directly from the Chicago Tribune and asked yourself, “Is that a major Chicago paper?” Maybe you saw something from a site like Last Line of Defense and asked, “What site is that?” Even if you didn’t see the site directly in your feed, the source was visible, because we still lived in a world of links. Maybe your mom shared something from the National Holocaust Museum, which has more than a little expertise in the Holocaust. Or maybe she shared a link from a Holocaust denial site, which does not. You’d use the trace move and evaluate that source.

That world is mostly gone. Pockets remain — LinkedIn, for all its faults, still lets you follow and evaluate domain experts, and Bluesky still rewards link-sharing.

In most places, that version of the web is dead. The slide began when Musk demonstrated you could tweak the algorithm to demote tweets that link to outside sources, and no one could do much about it. Demoting links keeps people on your platform, and almost all the platforms do it now: LinkedIn, Facebook, all of them. That lack of a tether to an outside world changes who gets views on platforms. Increasingly the people in your feed are what I’ve called news brokers: not reporters bringing expertise into a platform, but influencers repackaging others’ reporting or media as their own.

This in turn has created a social web strangely devoid of institutions and organizations to evaluate. With each person’s business model relying on building a personal following on a platform, it is, as the turtle cosmology story goes, influencers all the way down.

Take the October 7 massacre in 2023 and what news about it looked like on Twitter. Back then, I led a team of researchers studying how people were receiving news on this event.

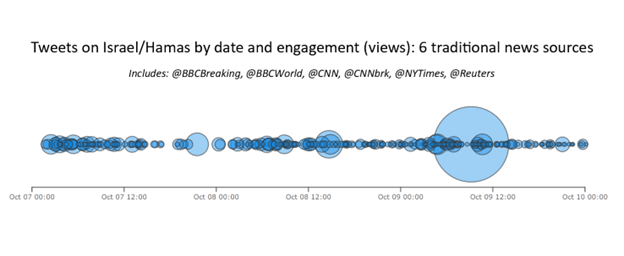

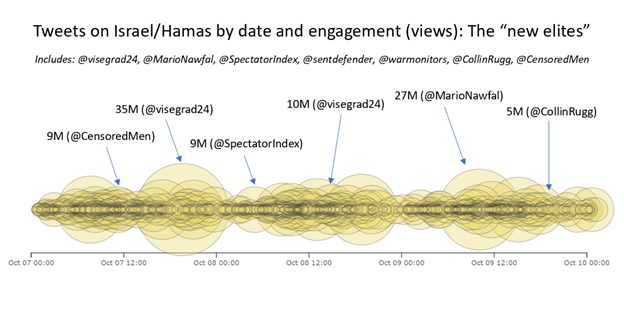

Over a three-day period, topic-relevant tweets from the six most highly subscribed news sources — sources with external sites and reputations — accumulated 112 million views across 298 tweets, an average of 376K per tweet. We stopped at six because after that we couldn’t find anything else that was even registering.

However, topic-relevant tweets from the top seven Twitter-only accounts — almost all sharing unsourced information, none with a traditional web presence — accumulated 1.6 billion views across 1,834 tweets.

That’s billion with a b.

As dark as this is, it understates exposure to Twitter-only news “influencers” versus sourced news sites. First, the biggest blue bubble up top in that graph was largely due to people hate-tweeting a post. Without that post, the disparity is far worse. Second, this was 2023. The situation has since deteriorated.

In 2016 we were worried about fake news sites on social media. Well, that problem is solved in a way: news sites have largely disappeared from social media altogether.

Information doesn’t actually want to be free

The demise of the website on social media wasn’t just due to the greediness of platform operators not wanting you to leave their site. It’s also because there are very few free sources of information on the web anymore.

When I started this journey back in 2010, a blog meant Blogger, and the cool new kid on the block was WordPress. These were free sites anyone could access. What is blogging now? It’s Substack, where you get the first couple paragraphs free and then hit the paywall.

When I started, every paper I can remember allowed at least five free articles a month. It was easy to have students confirm an event, because even if it happened in some obscure Nebraska town you could get that article free. Now that paper is probably dead or spam, but if it’s not, you’re going to have to pull out your credit card for it. You can tell your students, “Hey, the New York Times is pretty reliable, look at that” — but what does it matter if when the research urge strikes them it immediately becomes a purchase decision? You can show them multiple times how to evaluate a website they come upon, but what does it matter if they rarely encounter websites?

People often comment on how web search has deteriorated. Search companies bear some blame for that, but the part no one wants to admit is that there is very little free content left to link to. Web search was never supposed to be a payment portal to a bunch of subscription-gated content. It was supposed to be an index of the information freely available to the user. That part of the internet has contracted significantly; everything is gated now, in a walled garden or behind a paywall. There is little to put in a search result because there is less freely available information than there was ten years ago.

Short-form video deals the death blow

That element — the death of the linked, free source — has been a slow decline. But the shift to short-form video on platforms like TikTok has been quicker and more dramatic. TikTok spreads claims all the time. Sources, not so much. If you do see something from a newspaper, it’s as likely to be a video of them doing a skit explaining the news as actual reporting. And compared to video of a random person pointing to blurry photos or unsourced “footage from nowhere,” you’re going to see very little of those explainer skits anyway.

All of this is to say that — at least when evidence or news arrives at your doorstep — there is very little point in checking the source of it. The source isn’t being shared. That’s because algorithms demote it, or because there’s less point in linking to paywalled sources, or because formats like TikTok don’t work well with sourcing. The people you see in your feeds are no longer institutions or people linked to institutions. We can show you how to tell if that TikTok video turns out to be from a Republican organization or a Democratic one, but that’s not what you’re going to see — you’re going to see a mix of random people getting their 15 minutes and influencers with no institutional credibility to investigate. If they’re a big enough personality, maybe they have an assessable public profile, but even then, what are you doing exactly? Assessing the reliability of a TikTok influencer?

Everything is “fact-checking the mailman” now

This is all to say that if you see someone waving their hands toward a green-screened map telling you the AI economy is about to collapse because of a Strait of Hormuz closure and impending helium crunch, not a second should be spent asking who this person is or what that image behind them shows. Just immediately get to finding out whether the Strait of Hormuz is actually going to cause a helium-induced chip shortage.

In my first book back in 2017, I had a metaphor called “fact-checking the mailman,” an explanation of the “trace” move. It went like this: you get a flyer from an unknown sender. You open it and it tells you, among other things, that the oil industry is having a record year of profits. You run after the mailman and ask, “Excuse me, how much background do you have in oil industry economics? Do you have an editorial process? If not, why should I trust this flyer?”

That makes no sense, right? That dude is the mailman. He carried the mail to you. He’s a delivery mechanism, not a source of authority.

What I’m saying is that nearly everything is fact-checking the mail carrier now. There are exceptions, but online the rupture between reporting and research sources on one hand and presenting sources on the other has become so complete that I don’t think it’s useful to tell people to first figure out whether to check the claim or the source. Given the nature of the sources that reach people, you just start with the claim, every time.

That doesn’t mean sources don’t matter — they do, especially at later stages where students need to trace claims to reliable websites. But the idea that students will stumble on an unknown blog that turns out to be either a respected health research group or a crank outfit is just not the reality anymore. If they go on TikTok or X, they’ll hit more bogus health claims than ever, but those claims won’t come with sources to evaluate.

The old web is dead. I don’t want to be like the early promoters of CRAAP, who found something that worked for some years in the early 2000s and never looked at how the environment evolved. I also don’t want to debase what is probably my greatest professional achievement by chasing gimmicks or indulging whims. But the web of lateral links this approach was born in has shifted, and I ignore that shift at my peril.

AI becomes the tissue of the web

Links still exist, of course, and they still matter, even if we have (regrettably, I think) moved away from a world where we share and promote links the way we used to. I’m still a link sharer, and if you’re reading this you likely are too. After all, you’re reading a blog.

My sense of the future, though, is this: most of the links you encounter won’t be shared with you and won’t be from one site to another. Most links (excepting internal links on sites) will be encountered via interaction with an LLM.

I say “the future,” but I mean two years, not ten. It’s already happening. Google’s AI Overviews are an LLM layer with links. ChatGPT is increasingly where people go to get their questions answered.

Links in other contexts aren’t going away. Journal articles will link to journal articles. Blogs will link to blogs. People will share gift links to the Atlantic. But I believe most links people encounter will come from an LLM or some other AI system, rather than from an individual sharing a link or an author providing one.

I think that is sad! It is a cultural loss of staggering proportions and I am barely out of my denial phase of grief. But I have also always believed you design educational methodologies that match the task environment, and that requires addressing the world we see.

If the links you have come from the LLM, then a big part of your practice is going to be getting better links out of it to start, rather than checking every link — just as a big part of getting good results from search is feeding it the right query.

To put it another way: the linkiness of the web will survive, but by and large it will be mediated by hybrid LLM-search technologies. That is significant!

What doesn’t change

The fundamental insight of SIFT was that there are three basic questions you can ask, and there are technical moves associated with each.

You can ask what other people know about the source. (I)

You can ask what other people know about the claim. (F)

You can ask if the presentation of the source material or artifact — a photo, a quote, a video — is accurate when traced back to the source. (T)

SIFT replaced long lists of very specific questions, because what we realized is that students are for the most part pretty good (not perfect, but OK) at recognizing what a good source is when they get information on it, and OK at recognizing what a strong claim looks like when they get information on it. Most of what we thought of as students having critical thinking problems was actually that students were not getting enough information to sit with before thinking about things. Not always, but a lot. What our research showed was that giving students concrete methods to forage a bit more information on these things beat out traditional media literacy training about what makes a good source, what propaganda looks like, and so on.

I’m going to repeat this too much here. I’m going to belabor the heck out of it, actually, because it’s core to understanding what was unique about SIFT and lateral reading methodologies like DIG’s Civic Online Reasoning.

Imagine someone says that leftist agitators set fire to a warehouse. One group of people asks questions like “Does this have emotional language? Does this seem like propaganda?” Another group has training on what makes a good source: things to look for that might indicate quality, like the presence of an editor or particular funding models. A third group, the SIFT group, is not trained in “things to think about” at all. Instead they have developed some quick habits (a couple minutes, honestly) that let them get a bit more information on the source, on what people know about the warehouse and the fire, and on whether quotes about it are presented consistently with how they appear in their original context.

It all seems obvious now, I think, that the third group will do the best. Let’s say they look for more information and find there actually is verified video of a protester dousing the place with lighter fluid and lighting it up. They are going to immediately recognize that the report may have some basis to it. Or let’s say they look and find there is a history of faulty wiring issues in this building that were called out for months before the fire. They are going to see that presenting this as arson is propaganda.

Once students have more information in front of them, they are actually far more adept at thinking than it first appears. As I’ve said before, the problem was not in students’ critical thinking — it was in the doing before the thinking that students were getting stuck.

Again, this is obvious now. At the time SIFT was rolled out in the mid-2010s it was anything but. Older media literacy and information literacy techniques assumed information scarcity; in these models it was assumed to be harder to get more information than to ask set questions about something you were already looking at. And in a previous world that would have been the case. If you got a chain email in 1995 about an event, there’d be precious little information you could gather about it. If you weren’t hopping in your car and heading to the library, you’d ask yourself very good questions like “Does this sound like propaganda?” and “Does this cite sources or is it word of mouth?” and “Does this mesh with my intuitions?” — and that would have to be it for the day.

SIFT was never just about what the letters stood for. It was concrete techniques welded to those lenses of source, claim, and original context. It annoyed me to no end when some researchers (who will remain unnamed) would do experiments where they would show something like the famous SIFT graphic to people for a couple minutes, or show someone how to search for a few minutes, and then try to measure results. Of course that won’t work. The best SIFT interventions I’ve seen involved about seven hours of instruction, with a lot of practice. I’ve seen good impacts from two 45-minute sessions, though not as strong. But at heart it’s an educational program, not a psychological experiment like ones on priming or nudges. You do have to teach! You have to show people how to pull query terms from a headline, how to search Wikipedia for background on an organization. And you’ve got to do it as practice. But if you paired the lenses of source, claim, and original context with these skills, you did well.

A very drafty version

Here is my current thought on a revision, which I wish I had the resources to test. By putting it up here in draft form, maybe someone will be inspired to help develop new university-level curricular materials that could be tested. It’s partially based on work I did with Google last year on updating some information literacy material, but whereas that was a bit of a bolt-on, this is a deeper cut into the core.

Let me show you where I am with it and then talk it through. To the extent that I have the power to announce “official” reworkings of SIFT, this is not that. This is what I would like to test in the classroom.

Here again is a draft of the moves, without the mapping to techniques yet.

Above it, not in it (SIFT for AI)

Stop and get it in. Get the claim into the LLM in the fastest, most convenient way. Don’t worry about the perfect prompt — you’ll sculpt it afterwards. The first step is the hardest part of the journey.

Investigate the evidence. Ask evidence-focused follow-ups. Gather and categorize important context. Get organized and sourced information, not answers.

Find better sources. Request sources suited to the task. Do you want the most academic source? The most recent? Ask for information about sources you do not recognize.

Track it down. Click the links. Hover. Visit the sources cited, and verify they have been presented accurately. Get out of the LLM to check the LLM.

What changed?

The biggest change is that we’ve moved most of source research into the “Find better sources” move. This isn’t demoting its importance, but stressing that most source verification will be about verifying sources provided by the LLM, not tracking down the sharing source on social media. You see a TikTok video suggesting a structure in the Grand Canyon looks manmade, or something claiming the drought in Europe is so bad that sunken battleships are reappearing. Can you trust that source?

This is the hard part where we mourn the web of the past, but the answer is: who cares? Yes, sometimes the source will be a real expert, especially on a link-focused platform like Bluesky. Sometimes it will be a trusted intermediary like Hank Green, an influencer known for taking care with his facts.

Most of the time, though, the person bringing it to you is just the mailman.

This allows space for the next change. Find better coverage was originally conceived as being about both finding better sources and finding out what other people said about the claim. In a search engine those are close to the same thing — you find out what others said by finding other sources. “Coverage” was a carefully chosen word to stand in for both issues.

LLMs split that ability in two. From within the LLM, we can start to see what other people have said about a claim without first discovering new sources. This is a major shift. Even if we still want students to venture out into sources, the fact that they can refine their understanding independent of new sources must be accounted for.

The proposal is therefore to split the actions within an LLM-search hybrid system into two: a claim-focused activity, where follow-ups collect and organize knowledge about the claim, and a source-focused move where effort goes toward finding more suitable sources and learning more about them. In my vision, the student often does a bit of both before a Track it Down move where they verify that whatever they’re seeing sourced is represented correctly by the hybrid interface or the sharing source.

This is only a DRAFT

It needs a curriculum and materials around it, and I need to pull in the mapping to techniques I have done in workshops like this. But it’s a start.

Note: This work partially builds on some work done over the summer of 2025, funded by Google, to extend their SIFT-based Super Searchers materials to cover the new Google interfaces. If you think that has influenced my approach, you really don’t know how stubborn I am about this particular topic. I am about as protective of the purity of SIFT as one could get; it is my legacy. If anything, I have delayed an update too long.
